Too many testing courses emphasize a superficial knowledge of basic ideas. This makes things easy for novices and reassures some practitioners that they understand the field. However, superficial knowledge is not deep enough to help students apply what they learn to their day-to-day work.

The BBST® series will attempt to foster a deeper level of learning by giving students more opportunities to practice, discuss, and evaluate what they are learning. The specific learning objectives will vary from course to course (each course will describe its own learning objectives).

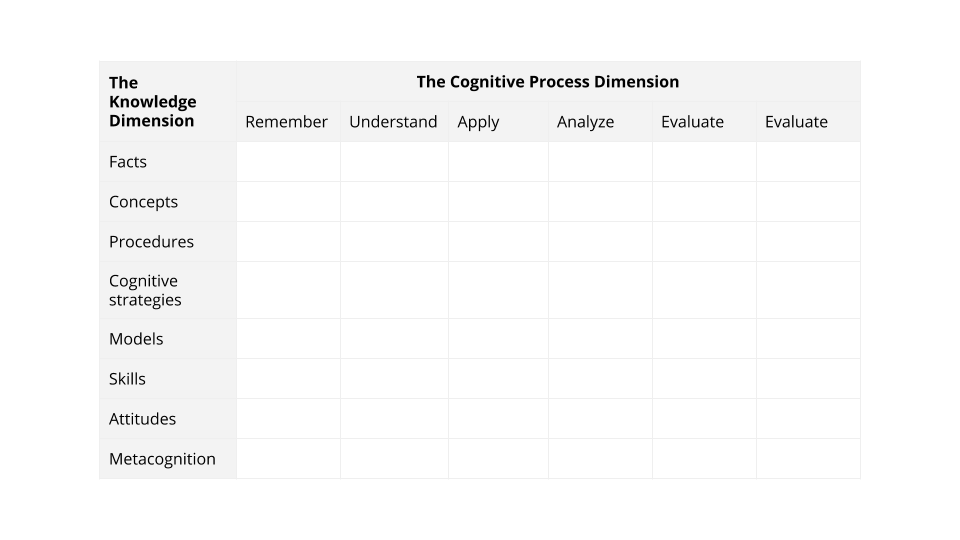

In the individual courses, we will describe our objectives in terms of Anderson & Krathwohl’s update to the Bloom Commission’s Taxonomy of Educational Objectives (1956).

At this point, the most important thing to understand about levels of learning is that teaching material at the lower levels (e.g. remembering, understanding) provides a weak foundation for higher-level work. And giving an exam that checks whether someone can remember or explain a concept tells you little or nothing about whether they can apply it, analyze or evaluate situations in terms of it, or create test materials using it. If you want to foster the use of what is learned at a level of cognitive depth, you have to teach to that level. If you want to find out whether your teaching was successful, you have to examine at that level.

For more on Bloom’s taxonomy, see Wikipedia, Don Clark’s page, or just ask Google for a wealth of useful stuff. For simple summaries of the Anderson / Krathwohl approach, see the Course Development Models page at Oregon State University.

Bach and I have extended Anderson & Krathwohl’s model slightly to highlight a few knowledge categories that apply directly to testers.

The Knowledge Dimension lists different types of things that we learn. The Cognitive Process Dimension considers how deeply we have learned them.

For example, in this model, a concept is a type of knowledge. We can learn that concept at different levels. Imagine someone who memorizes the definition of a concept (can demonstrate that he remembers it). This person might be able to pass a multiple-choice test that asked the person to identify the correct definition of the concept. However, if the person only learned the concept at the Remember level, he wouldn’t be able to explain it in his own words (demonstrate that he understands it) or use the concept to solve a problem (apply it).

Knowledge Dimension

Facts

A “statement of fact” is a statement that can be unambiguously proved true or false. For example, “James Bach was born in 1623” is a statement of fact. (But not true, for the James Bach we know and love.) A fact is the subject of a true statement of fact.

Facts include such things as:

- Tidbits about famous people

- Famous examples (the example might also be relevant to a concept, procedure, skill or attitude)

- Items of knowledge about devices (for example, a description of an interoperability problem between two devices)

Concepts

A concept is a general idea. “Concepts are abstract in that they omit the differences of things in their extension, treating them as if they were identical.” (Wikipedia: Concept). In practical terms, we treat the following kinds of things as “concepts” in this taxonomy:

- definitions

- descriptions of relationships between things

- descriptions of contrasts between things

- descriptions of the idea underlying a practice, process, task, or heuristic (whatever)

Here’s a distinction that you might find useful. Consider the oracle heuristic, “Compare the behavior of this program with a respected competitor and report a bug if this program’s behavior seems inconsistent with and possibly worse than the competitor’s.”

- If I am merely describing the heuristic, I am giving you a concept.

- If I tell you to make a decision based on this heuristic, I am giving you a rule.
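As a rough illustration of the heuristic applied as a rule, the sketch below runs a program under test and a reference implementation on the same inputs and collects every disagreement for a human to investigate. Everything in it is an assumption made up for this example: the names `comparison_oracle` and `our_round`, and the choice of Python's built-in `round` to stand in for the "respected competitor."

```python
from typing import Callable, Iterable, List, Tuple


def comparison_oracle(
    program: Callable[[float], int],
    competitor: Callable[[float], int],
    inputs: Iterable[float],
) -> List[Tuple[float, int, int]]:
    """Return (input, our_result, competitor_result) for each disagreement."""
    suspects = []
    for x in inputs:
        ours, theirs = program(x), competitor(x)
        if ours != theirs:
            # An inconsistency is a reason to investigate, not proof of a bug.
            suspects.append((x, ours, theirs))
    return suspects


def our_round(x: float) -> int:
    # Hypothetical program under test: rounds halves up (non-negative x only).
    return int(x + 0.5)


# Python's built-in round() rounds halves to the nearest even integer,
# so the two implementations disagree on 0.5 and 2.5.
suspects = comparison_oracle(our_round, round, [0.4, 0.5, 1.5, 2.5])
print(suspects)
```

The oracle only reports inconsistencies. Deciding whether a given inconsistency is actually worse than the competitor's behavior, and therefore worth reporting, still takes human judgment, which is why the heuristic is fallible.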

Sometimes, a rule is a concept. A rule is an imperative (“Stop at a red light”) or a causal relationship (“Two plus two yields four”) or a statement of a norm (“Don’t wear undershorts outside of your pants at formal meetings”). The description/definition of the rule is the concept. Applying the rule in a straightforward way is application of a concept. The decision to puzzle through the value or applicability of a rule is in the realm of cognitive strategies. The description of a rule in a formalized way is probably a model.

Procedures

“Procedures” are algorithms. They include a reproducible set of steps for achieving a goal. Consider the task of reporting a bug and imagine that someone has:

- broken this task down into subtasks (simplify the steps, look for more general conditions, write a short descriptive summary, etc.) and

- presented the tasks in a sequential order.

This description is:

- intended as a procedure if the author expects you to do all of the steps in exactly this order every time.

- a cognitive strategy if it is meant to provide a set of ideas to help you think through what you have to do for a given bug, with the understanding that you may do different things in different orders each time, but find this a useful reference point as you go.

Cognitive Strategies

“Cognitive strategies are guiding procedures that students can use to help them complete less-structured tasks such as those in reading comprehension and writing. The concept of cognitive strategies and the research on cognitive strategies represent the third important advance in instruction.

“There are some academic tasks that are “well-structured.” These tasks can be broken down into a fixed sequence of subtasks and steps that consistently lead to the same goal. The steps are concrete and visible. There is a specific, predictable algorithm that can be followed, one that enables students to obtain the same result each time they perform the algorithmic operations. These well-structured tasks are taught by teaching each step of the algorithm to students. The results of the research on teacher effects are particularly relevant in helping us learn how to teach students algorithms they can use to complete well-structured tasks.

“In contrast, reading comprehension, writing, and study skills are examples of less-structured tasks — tasks that cannot be broken down into a fixed sequence of subtasks and steps that consistently and unfailingly lead to the goal. Because these tasks are less-structured and difficult, they have also been called higher-level tasks. These types of tasks do not have the fixed sequence that is part of well-structured tasks. One cannot develop algorithms that students can use to complete these tasks.”

From: Barak Rosenshine, Advances in Research on Instruction, Chapter 10 in J.W. Lloyd, E.J. Kame’enui, and D. Chard (Eds.) (1997) Issues in educating students with disabilities. Mahwah, N.J.: Lawrence Erlbaum, pp. 197-221.

In cognitive strategies, we include:

- heuristics (fallible but useful decision rules)

- guidelines (fallible but common descriptions of how to do things)

- good (rather than “best”) practices

The relationship between cognitive strategies and models:

- deciding to apply a model and figuring out how to apply a model involve cognitive strategies

- deciding to create a model and figuring out how to create models to represent or simplify a problem involve cognitive strategies

BUT

- the model itself is a simplified representation of something, done to give you insight into the thing you are modeling.

- We aren’t sure that the distinction between models and the use of them is worthwhile, but it seems natural to us so we’re making it.

Models

A model is:

- a simplified representation created to make it easier to understand, manipulate, or predict some aspects of the modeled object or system.

- an expression of something we don’t understand in terms of something we (think we) understand.

A state-machine representation of a program is a model. Deciding to use a state-machine representation of a program as a vehicle for generating tests is a cognitive strategy. Slavishly following someone’s step-by-step catalog of best practices for generating a state-machine model of a program in order to derive scripted test cases for some fool to follow is a procedure.
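To make the state-machine idea concrete, here is a minimal sketch of such a model and one simple way to derive candidate test sequences from it. The document editor, its states and events, and the function name `event_paths` are all hypothetical, invented for this illustration.

```python
from typing import Dict, List, Tuple

# Hypothetical model of a document editor: (state, event) -> next state.
TRANSITIONS: Dict[Tuple[str, str], str] = {
    ("closed", "open"): "clean",
    ("clean", "edit"): "dirty",
    ("clean", "close"): "closed",
    ("dirty", "edit"): "dirty",
    ("dirty", "save"): "clean",
    ("dirty", "close"): "closed",
}


def event_paths(start: str, length: int) -> List[List[str]]:
    """List every event sequence of the given length that the model allows."""
    if length == 0:
        return [[]]
    paths = []
    for (state, event), next_state in TRANSITIONS.items():
        if state == start:
            # Extend this event with every allowed continuation.
            for rest in event_paths(next_state, length - 1):
                paths.append([event] + rest)
    return paths


# Each path is a candidate test: a sequence of events to drive the program with.
paths = event_paths("closed", 3)
for path in paths:
    print(" -> ".join(path))
```

The `TRANSITIONS` table is the model. Choosing to enumerate paths of length three, rather than sampling long random walks or targeting particular transitions, is the kind of decision that belongs to cognitive strategy rather than to the model itself.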

This definition of a model is a concept. The assertion that Harry Robinson publishes papers on software testing and models is a statement of fact. Sometimes, a rule is a model.

- A rule is an imperative (“Stop at a red light”) or a causal relationship (“Two plus two yields four”) or a statement of a norm (“Don’t wear undershorts outside of your pants at formal meetings”).

- A description/definition of the rule is probably a concept

- A symbolic or generalized description of a rule is probably a model.

Skills

Skills are things that improve with practice.

- Effective bug report writing is a skill, and includes several other skills.

- Taking a visible failure and varying your test conditions until you find a simpler set of conditions that yields the same failure is skilled work. You get better at this type of thing over time.
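The mechanical core of that simplification can be sketched as a greedy loop: drop one condition at a time and keep the smaller set whenever the failure still reproduces. This is only an illustration under made-up assumptions. The printing scenario, the `fails` check, and the function name `simplify` are hypothetical, and a skilled tester brings far more judgment to the task than this loop does.

```python
from typing import Callable, List


def simplify(conditions: List[str], fails: Callable[[List[str]], bool]) -> List[str]:
    """Greedily drop conditions one at a time while the failure still reproduces."""
    current = list(conditions)
    changed = True
    while changed:
        changed = False
        for i in range(len(current)):
            candidate = current[:i] + current[i + 1:]
            if fails(candidate):
                # The failure survives without this condition, so discard it.
                current = candidate
                changed = True
                break
    return current


def fails(conditions: List[str]) -> bool:
    # Hypothetical failure: printing breaks when landscape and duplex are combined.
    return "landscape" in conditions and "duplex" in conditions


minimal = simplify(["A4", "landscape", "color", "duplex", "draft"], fails)
print(minimal)
```

In practice, each call to `fails` is a test someone has to run, so choosing which conditions to vary first is part of the skill.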

Entries into this section will often be triggered by examples (in instructional materials) that demonstrate skilled work, like “Here’s how I use this technique” or “Here’s how I found that bug.” The “here’s how” might be classed as a:

- procedure

- cognitive strategy, or

- skill

In many cases, it would be accurate and useful to class it as both a skill and a cognitive strategy.

Attitudes

“An attitude is a persisting state that modifies an individual’s choices of action.” Robert M. Gagne, Leslie J. Briggs & Walter W. Wager (1992) “Principles of Instructional Design” (4th ed), p. 48.

Attitudes are often based on beliefs (a belief is a proposition that is held as true whether it has been verified true or not). Instructional materials often attempt to influence the student’s attitudes. For example, when we teach students that complete testing is impossible, we might spin the information in different ways to influence student attitudes toward their work:

- given the impossibility, testers must be creative and must actively consider what they can do at each moment that will yield the highest informational return for their project

- given the impossibility, testers must conform to the carefully agreed procedures because these reflect agreements reached among the key stakeholders rather than diverting their time to the infinity of interesting alternatives

Attitudes are extremely controversial in our field and refusal to acknowledge legitimate differences (or even the existence of differences) has been the source of a great deal of ill will.

In general, if we identify an attitude or an attitude-related belief as something to include as an assessable item, we should expect to create questions that:

- define the item without requiring the examinee to agree that it is true or valid

- contrast it with a widely accepted alternative, without requiring the examinee to agree that it is better or preferable to the alternative

- adopt it as the One True View, but with discussion notes that reference the controversy about this belief or attitude and make clear that this item will be accepted for some exams and bounced out of others.

Metacognition

Metacognition refers to the executive process that is involved in such tasks as:

- planning (such as choosing which procedure or cognitive strategy to adopt for a specific task)

- estimating how long it will take (or at least, deciding to estimate and figuring out what skill/procedure/slave-labor to apply to obtain that information)

- monitoring how well you are applying the procedure or strategy

- remembering a definition or realizing that you don’t remember it and rooting through Google for an adequate substitute

Much of context-driven testing involves metacognitive questions:

- which test technique would be most useful for exposing what information that would be of what interest to whom?

- what areas are most critical to test next, in the face of this information about risks, stakeholder priorities, available skills, available resources?

Questions/issues that should get you thinking about metacognition are:

- How to think about …

- How to learn about …

- How to talk about …

For example, the BBST® short on specification analysis includes a long metacognitive digression into active reading and strategies for getting good information value from the specification fragments you encounter, search for, or create.

Cognitive Process Dimension

The idea of the Cognitive Process Dimension is that we are characterizing the level, or depth, of learning.

Remember

You don’t have to understand something to remember it. For example, you can demonstrate that you remember a definition by recognizing it in a multiple-choice list of definitions or by writing it out. You might not know what the definition means, but you remember it.

Understand

If you understand something, you should be able to explain it in your own words, give examples of it, or recognize some implications of it.

Apply

If you know something well enough to apply it, you can actually use it in real-life circumstances. You can go beyond talking about it to getting something done with it.

Analyze

To analyze an idea usually means to break it down into components and understand the relationships among the components. For example, the concept of saving a file includes concepts of location on the disk (directory, file allocation table), size of the file (and how to tell whether it will fit on the disk), and the file format (text, rtf, MS Word doc format, etc.).

Evaluate

To evaluate an idea is to consider its positives and negatives, in its own terms or in comparison with other possibilities. For example, is using this particular test design technique a good idea? Is it the most useful one to apply to this situation? How would this idea be more useful and how would it be less useful than this other idea that we might apply to this situation?

Create

When you create new things (for example, new test techniques), you use old things as foundations. Another example: to create an effective test plan, you need to understand the relevant test techniques and management tactics well enough to decide what best belongs in the plan.
